2026-05-05. Nomoyu Daily for Indie Developers (Issue 353)

📰 News

After the carnival of 200,000 agents, the real problem remains unsolved

The most overestimated AI product this year is not the large model but the agent.

Especially the kind where a group of agents @-mention one another on screen, hand each other tasks, and summarize for each other, so that it looks like a digital company holding a meeting.

But the problem is this: meetings are not collaboration, and flooding the screen with messages is not productivity.

Moltbook is a textbook example.

A group of AI agents gathered and formed their own "social network," producing 14 million comments and 2.3 million posts, with about 200,000 verified agents interacting back and forth. They even seemed to be developing some kind of "religion."

It is very sci-fi and very exciting.

But what really deserves attention is not "AI is about to awaken" but a colder fact: many of these agents are merely imitating the behavior patterns of human social media.

They can reply, echo, and create buzz. But they are not truly collaborating.

An interview with Cisco Outshift puts it sharply: this is not collaboration but the theater of collaboration.

That phrase describes most of today's agent products almost exactly.

What you see is a cast of roles performing: a researcher agent, a writing agent, an execution agent, a review agent. They take turns speaking and look very much like an efficient team.

But without shared state, a governance layer, reliable identity, permission boundaries, and semantic validation of tasks, they are essentially just “prompts with job titles.”

The real agent revolution is not about making AI better at chatting. It is about making AI genuinely able to coordinate under constraints and complete complex tasks.

This is also the most valuable part of the Cisco Outshift interview: they treat agents not as smarter chatbots but as a new kind of network entity.

This kind of entity is strange.

It is like software, because it runs in the cloud and in code. It is also like a person, because it has identity, capabilities, permissions, goals, and behavioral boundaries.

So future agent systems cannot rely on "one stronger large model" alone. They need a new layer of infrastructure that decides who can find whom, who can call whom, who can see whom, and whether access can be revoked when something goes wrong.

This does not sound sexy, but it is what really decides whether AI can enter enterprises and handle serious work.

Many so-called agents today are fixed workflows: step one, search for materials; step two, write a summary; step three, generate a report. That is useful, of course, but it is automation, not intelligent collaboration.

The truly valuable scenarios are tasks that nobody can script in advance.

For example, a company suffers a serious IT failure. The cause may be in a Kubernetes cluster, a security attack, an anomaly in observability data, or it may require crisis communication with customers.

At that moment, one universal agent is not enough.

You need an SRE agent, a security agent, an observability agent, a communication agent, and a root cause analysis agent, possibly from different vendors, systems, and cloud environments, to team up on the fly.

They do not follow a fixed workflow. They self-assemble, divide the work, and adjust to the situation on the ground.

That is what an agent swarm is.

But here comes the problem: a group of agents that can self-assemble sounds powerful, but also dangerous.

So this judgment from the interview is critical:

An agent without autonomy is useless. An agent with excessive autonomy is a bomb.

What enterprises really need is not “letting AI fly free,” but “releasing AI in a controllable way.”

How do you control it?

First, control connections. Not every agent should be able to talk to every other agent. Just as enterprise networks use segmentation and microsegmentation, agents should enter separate "rooms" and communicate only with peers relevant to their task.

Second, control identity. Every agent needs a verifiable identity. Nobody should get in just by wearing a costume. Otherwise, agent networks will become a paradise for automated scams.
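A verifiable identity can be sketched with Python's standard `hmac` module, assuming a hypothetical central registry (`IdentityRegistry` is an illustrative name, not a real product). This is a deliberate simplification: one registry-held secret signs each agent ID. A real deployment would more likely use public-key signatures so that verifiers never hold the signing key.

```python
import hashlib
import hmac
import secrets


class IdentityRegistry:
    """Hypothetical registry that issues, verifies, and revokes agent credentials.

    Simplification: HMAC-SHA256 with a single registry-held key.
    """

    def __init__(self) -> None:
        self._key = secrets.token_bytes(32)
        self._revoked: set[str] = set()

    def issue(self, agent_id: str) -> str:
        # The token binds this specific agent ID to the registry's secret key.
        return hmac.new(self._key, agent_id.encode(), hashlib.sha256).hexdigest()

    def revoke(self, agent_id: str) -> None:
        self._revoked.add(agent_id)

    def verify(self, agent_id: str, token: str) -> bool:
        if agent_id in self._revoked:
            return False
        expected = self.issue(agent_id)
        # Constant-time comparison avoids leaking how much of the token matched.
        return hmac.compare_digest(expected, token)


registry = IdentityRegistry()
token = registry.issue("sre-agent")
print(registry.verify("sre-agent", token))       # True
print(registry.verify("security-agent", token))  # False: borrowed credential
```

The second check is the "costume" case: an agent presenting another agent's token under a different name is rejected, and `revoke` lets the registry cut off a compromised agent immediately.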

Third, control tool permissions. If you only ask an agent to check the EUR/USD exchange rate, and it suddenly says it also wants to initiate a transaction, the system must be able to judge: no, you have crossed the boundary.

This is semantic-level permission validation.

The most important security question in the future is not only “can it access this tool,” but “why is it accessing this tool in this task?”
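The EUR/USD example can be sketched as a task-scoped check that asks whether a call fits the task, not merely whether the tool is reachable. All names here (`Task`, `ToolCall`, `check_call`, `fx_api`) are hypothetical. In this sketch, the semantic judgment is reduced to a lookup of actions declared for the task. Deciding which actions a free-text task actually needs is the hard part, and it is the kind of review the article suggests assigning to small, cheap models.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Task:
    description: str
    # Actions this task legitimately needs, as "tool:action" strings.
    allowed_actions: frozenset[str]


@dataclass(frozen=True)
class ToolCall:
    tool: str
    action: str


@dataclass(frozen=True)
class Decision:
    allowed: bool
    reason: str  # kept so the system can explain why it allowed or refused


def check_call(task: Task, call: ToolCall) -> Decision:
    """Judge a tool call against the purpose of the task, not just tool access."""
    key = f"{call.tool}:{call.action}"
    if key in task.allowed_actions:
        return Decision(True, f"{key} is within the scope of: {task.description}")
    return Decision(False, f"{key} exceeds the scope of: {task.description}")


task = Task("check the EUR/USD exchange rate", frozenset({"fx_api:read_rate"}))
print(check_call(task, ToolCall("fx_api", "read_rate")).allowed)    # True
print(check_call(task, ToolCall("fx_api", "place_trade")).allowed)  # False
```

Both calls use the same tool, so a plain "can it access `fx_api`" rule would allow both. Only the task-aware check rejects the trade, and the returned `reason` gives the audit trail something to record.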

This is also where many people underestimate the cost of agents.

If every tool call, every semantic review, and every security decision is handed to a large model, costs will explode. That is why small language models will become very important: they do not need to know everything, but they need to be cheap, stable, and reliable in specific roles.

In plain terms, future agent systems will not be like a genius employee. They will be more like a company: with an org chart, access control, meeting rooms, audits, job descriptions, and mechanisms for removing someone at any time.

Many agent products today are at most “employee cosplay.”

There is one final, bigger judgment: the future of agents must be open.

If every company locks its agents inside its own walled garden, the AI world will not become an “intelligent internet.” It will become a pile of new SaaS islands.

The internet is great not because one company invented everything, but because it is open enough, interoperable enough, and extensible enough.

Agents are the same.

The next AI explosion will not happen in a group chat where digital people flatter each other. It will happen in the invisible places: identity, protocols, permissions, semantics, observability, revocation, and coordination.

So when judging whether an agent product is reliable, do not only look at how many roles it has, how many workflows it has, or how many cool flowcharts it shows.

You should ask four questions:

- Can it explain why it did what it did?

- Can it be restricted, audited, and revoked?

- Can it collaborate with other people’s agents?

- When it makes a mistake, can the system keep the damage inside one room?

If not, it is not an agent. It is just a chatroom with an AI label.

🖥️ Software

Ikigai Revelation

Ikigai Revelation is an AI-based personal growth tool that generates personalized reports by analyzing users' answers across four dimensions, presented in a Zen-inspired Japanese aesthetic.

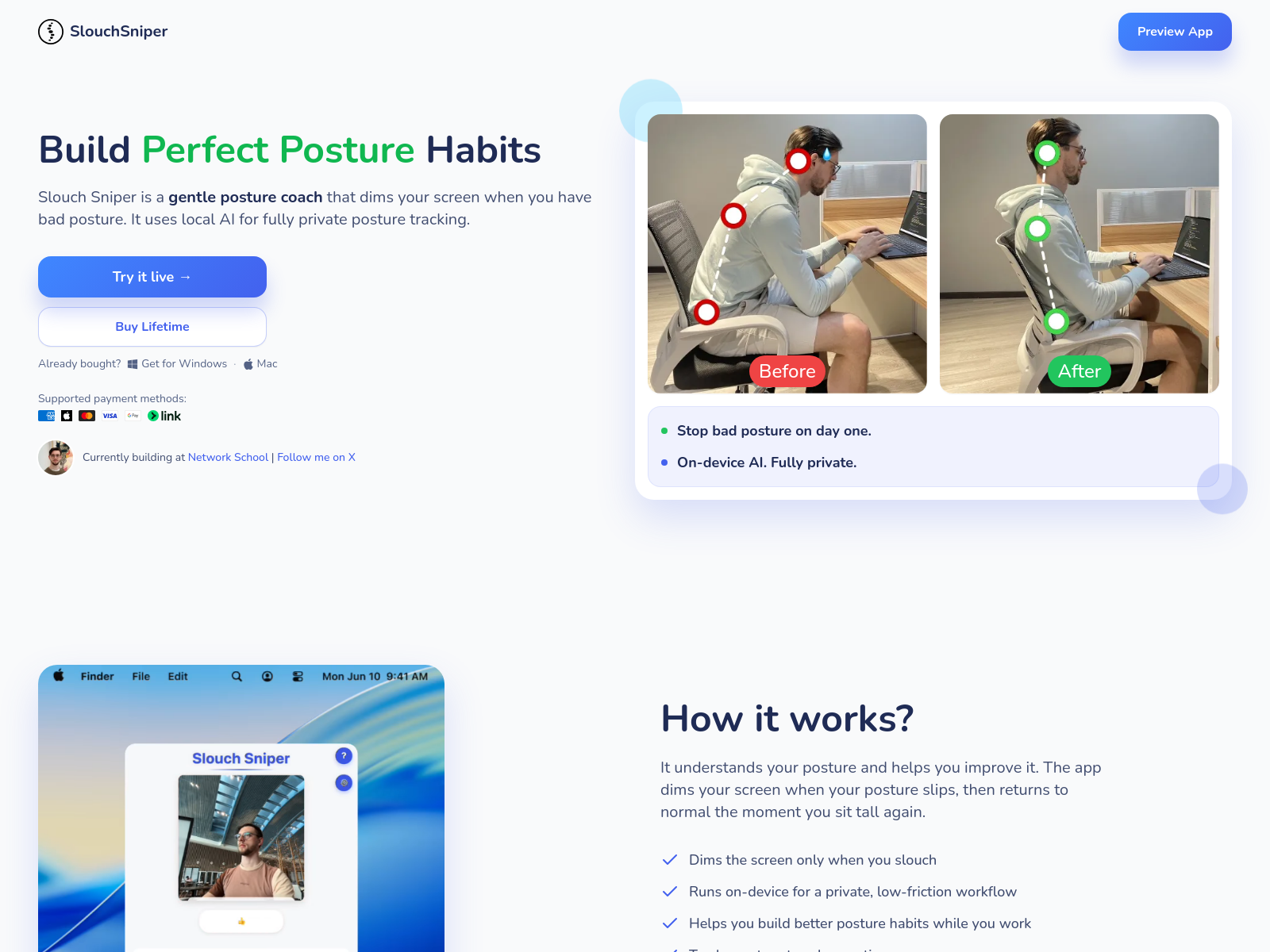

Slouch Sniper

Slouch Sniper is an indie app that helps users improve their sitting posture by sending real-time reminders to correct it.

Sumatra PDF

Sumatra PDF is a lightweight PDF reader that has recently added features such as command-line tools, ARM64 support, and dark mode.

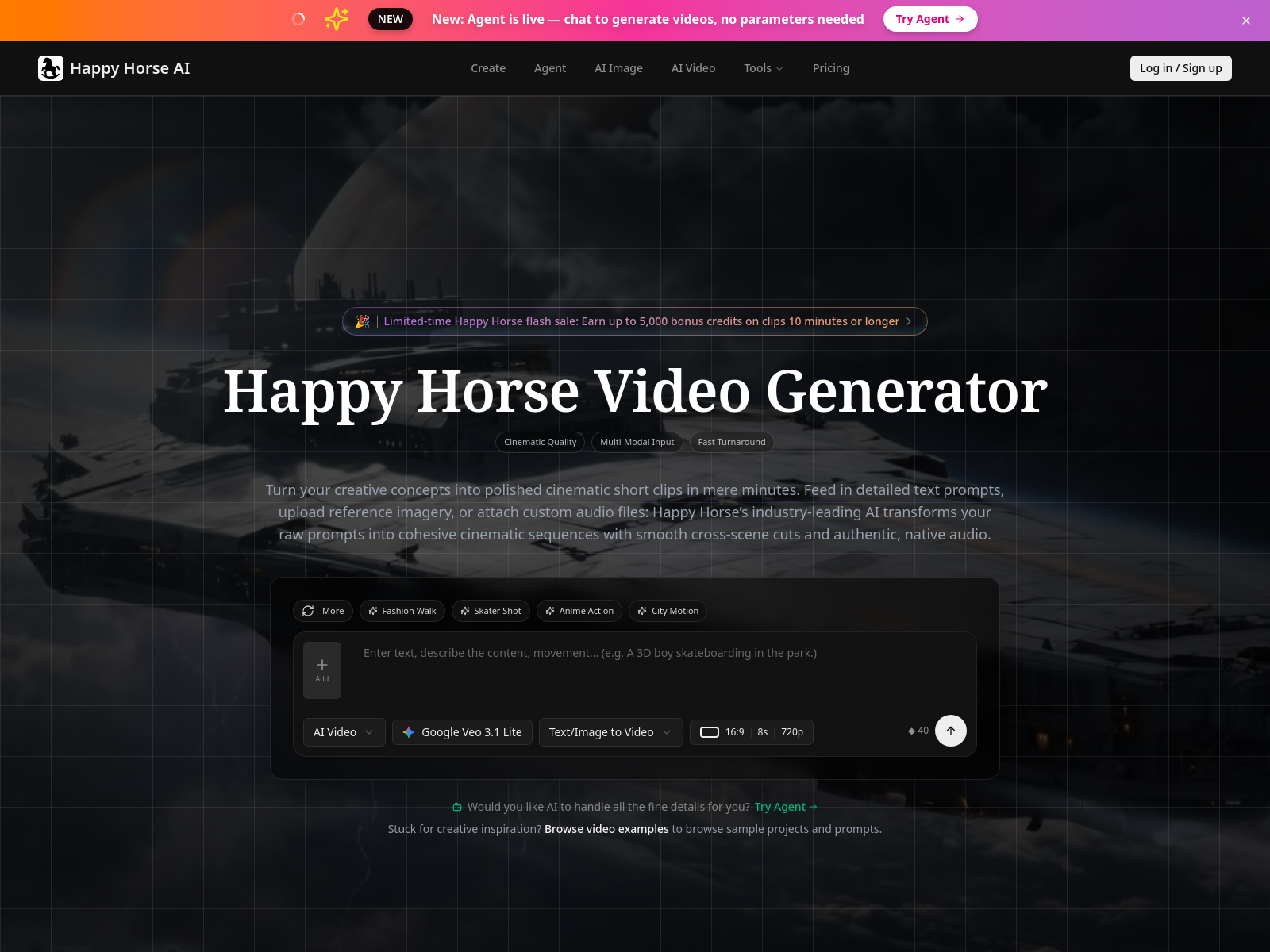

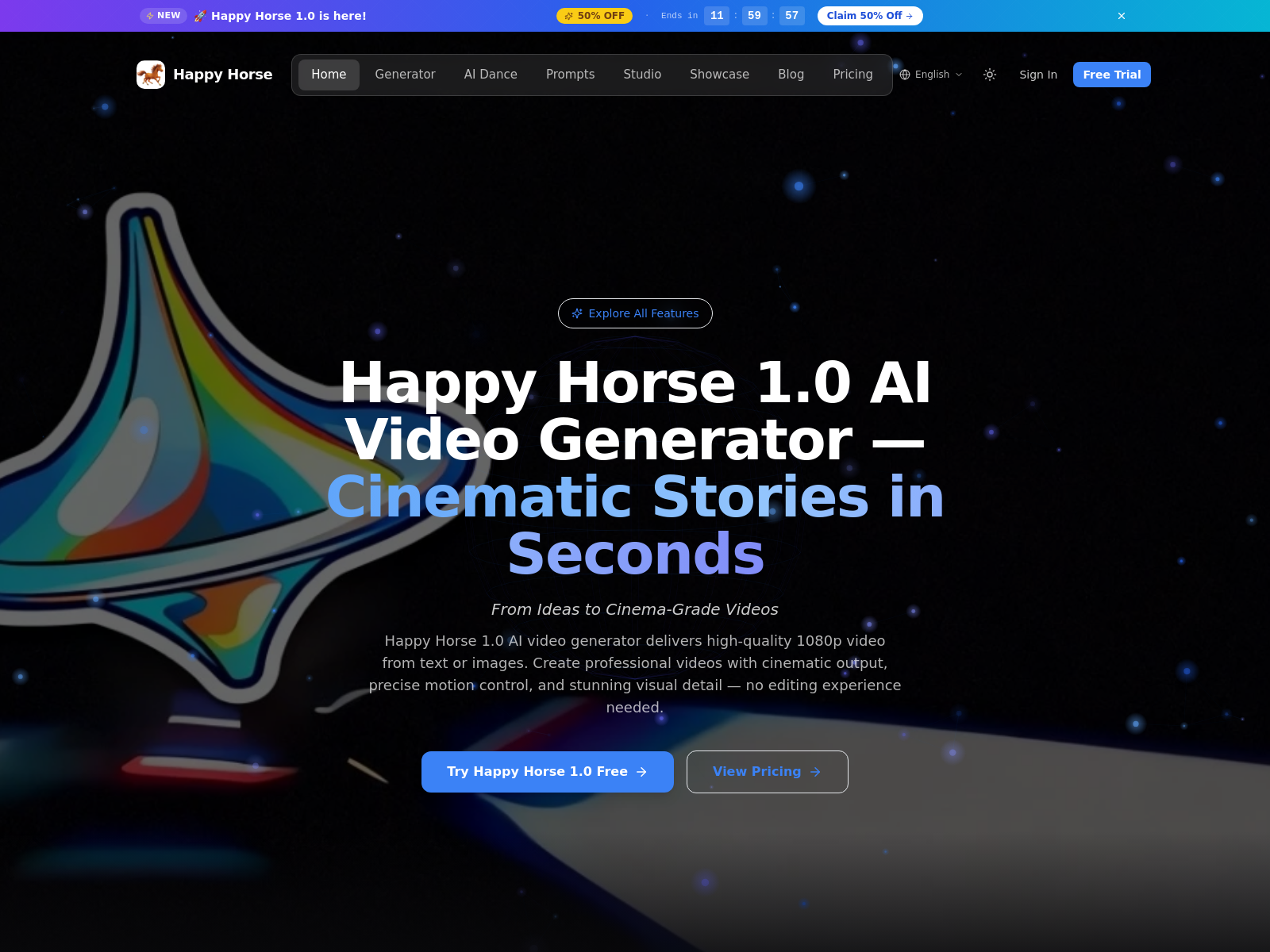

Happy Horse

Happy Horse is an AI video tool that supports multi-shot generation and lip sync. It can shorten video production workflows and suits advertising and e-commerce scenarios.

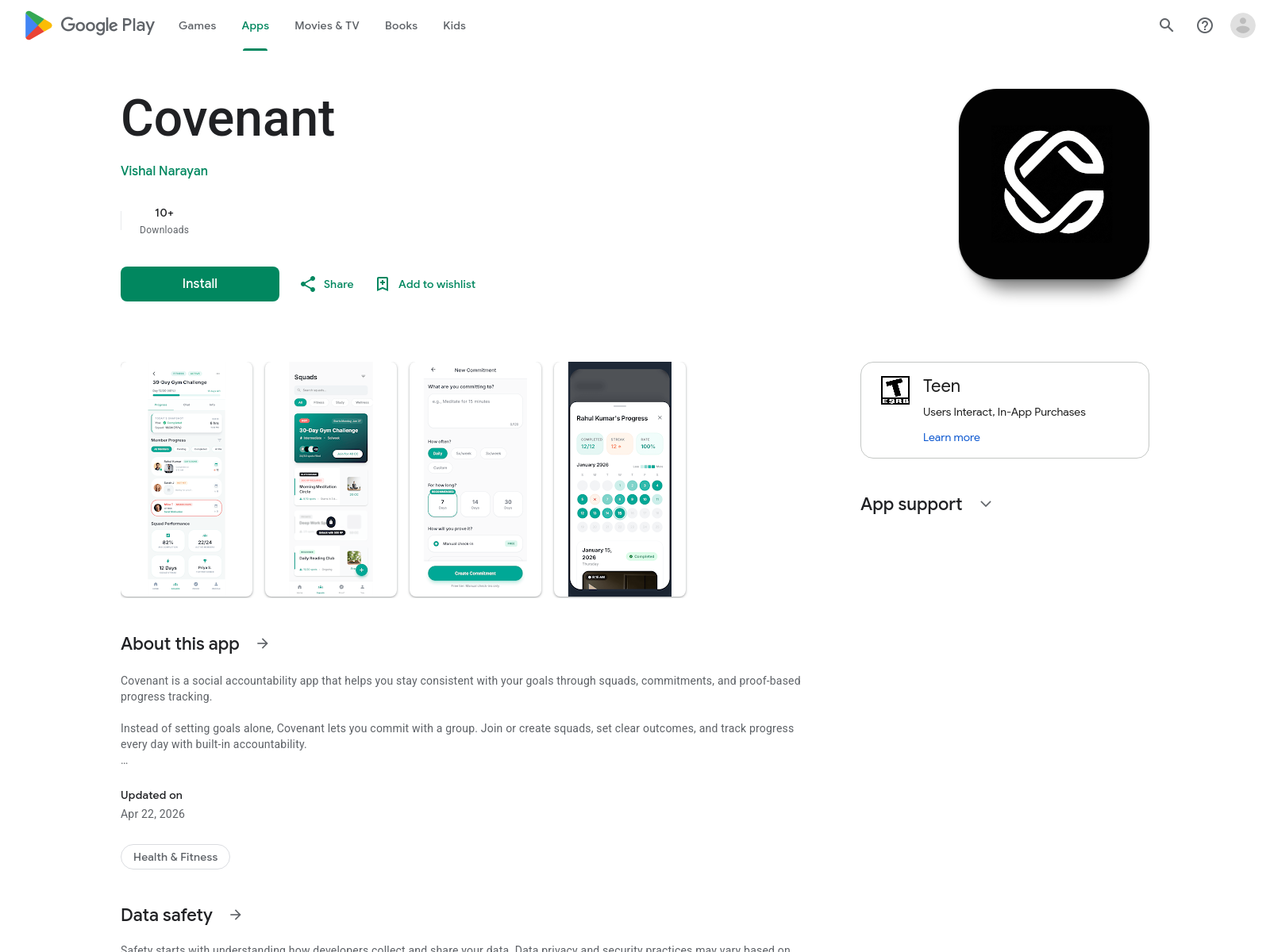

Covenant

Covenant is a habit tracking app that treats goals as self-commitments, using a minimalist interface to help users build a sense of responsibility to themselves.

SleepWave

SleepWave is an offline sleep monitoring app with snoring tracking and sleep cycle analysis. It can replay nighttime recordings and plot noise curves.

avatar moraya

avatar moraya is an AI avatar generator that requires no registration. It supports generating personalized avatars in multiple styles from descriptions or uploaded photos.

Shelf’d

Shelf’d is an indie dating app that builds social connections based on bookshelf content. It hides profile photos to encourage deeper conversation and reduce appearance-based judgment.

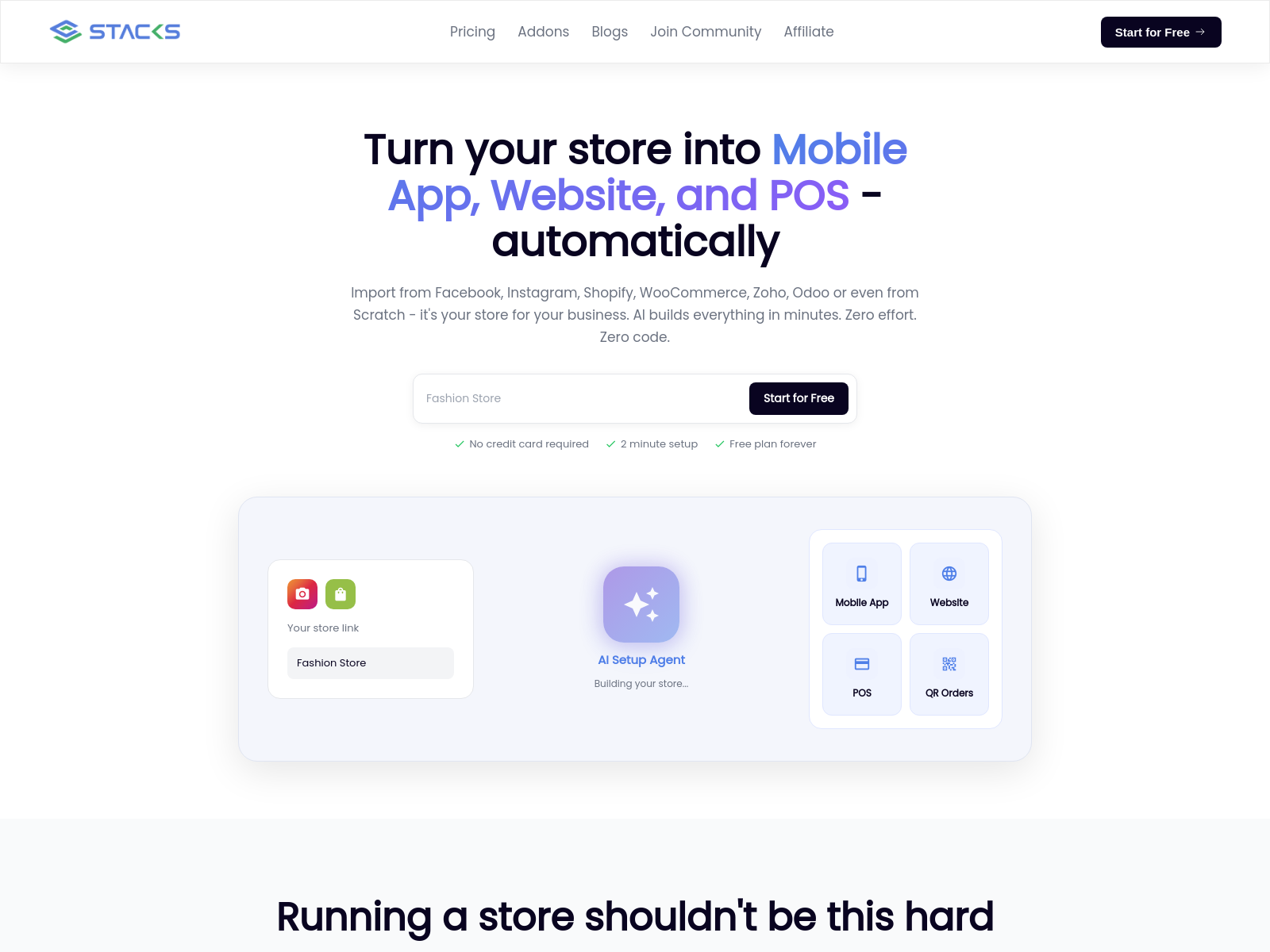

Stacks Market

Stacks Market is an automation tool that converts Shopify, Instagram, and other stores into mobile apps, websites, and POS systems with one click, with deployment finished in under two minutes.

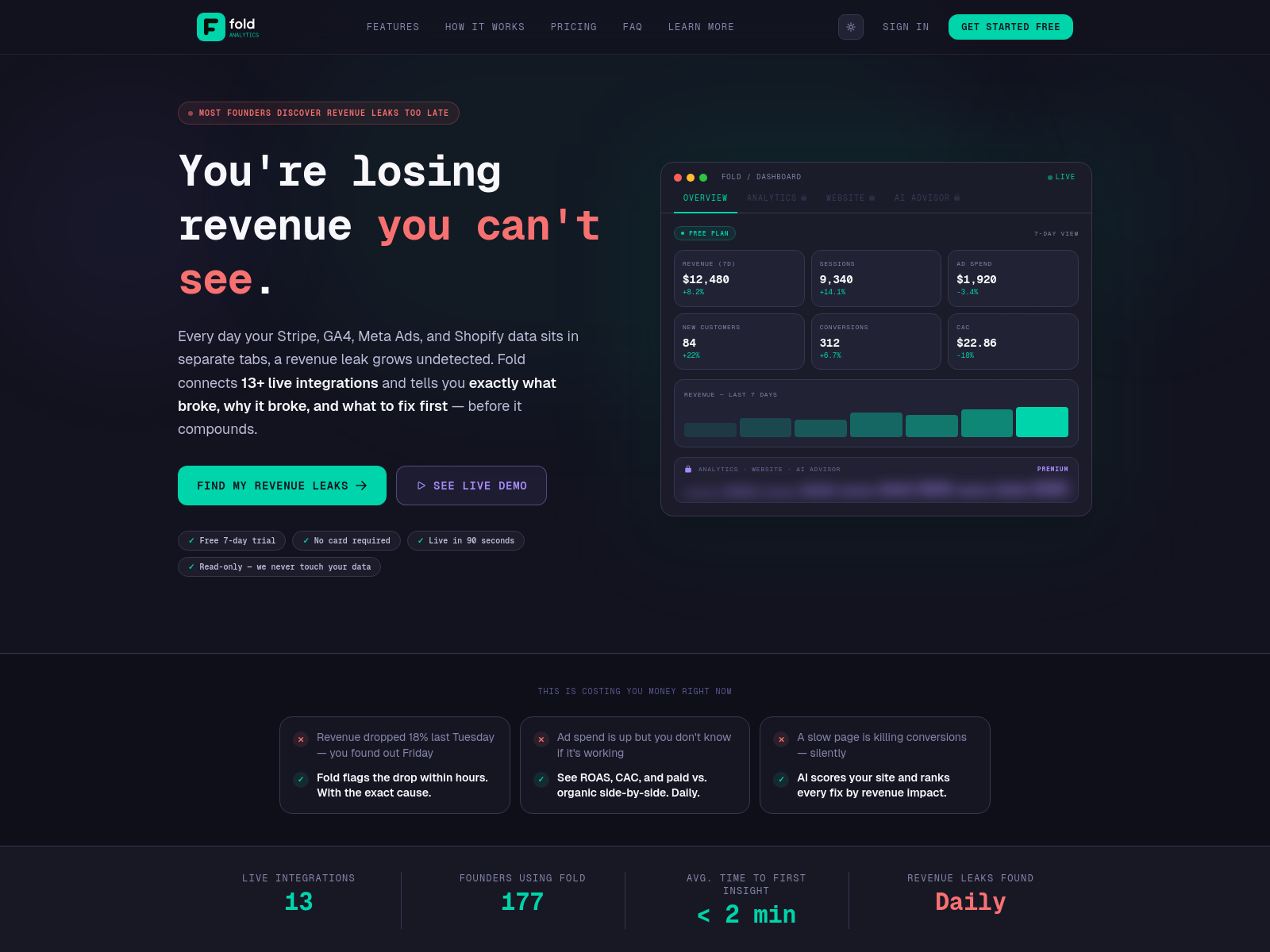

Fold

Fold is a dashboard tool that helps indie developers combine Stripe, Google Analytics, and ad data for precise conversion analysis and better-targeted promotion.

Happy Horse

Happy Horse is an AI video generation tool that can generate 720p audio-synchronized videos from text or images, with support for custom voices and scene matching, requiring no post-production editing.

🎮 Games

Vitrified

Vitrified is an indie game whose developer shared two years of development experience and the process of accumulating 2,500 Steam wishlists.

🌐 Websites

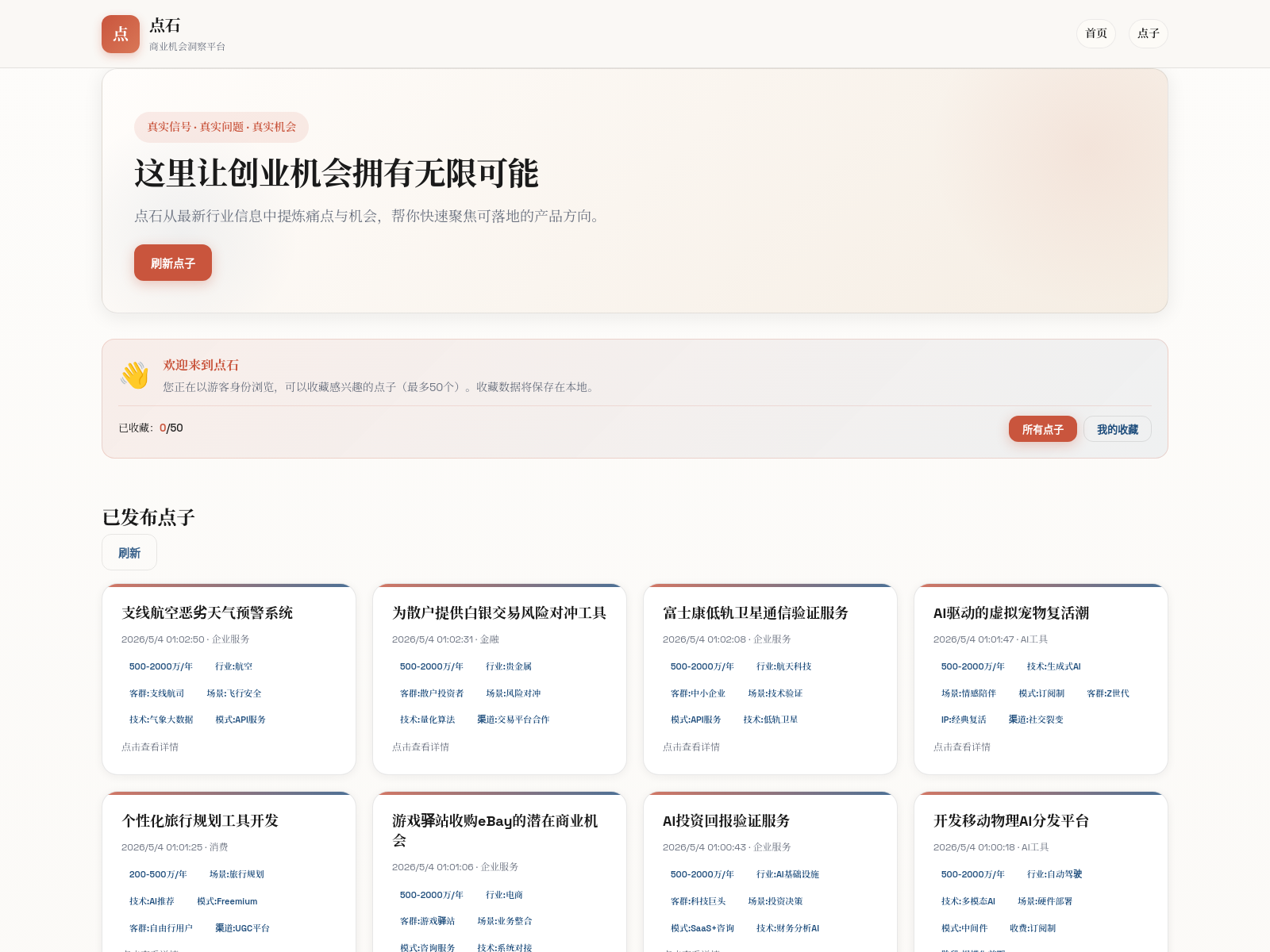

点石网

点石网 is an MVP tool focused on overseas micro-business ideas. It automatically breaks down each idea's pain points and monetization methods, helping indie developers quickly spot small, elegant, and feasible projects.

HappyHorse AI Showcase

HappyHorse AI Showcase is a showcase website built around popular new models, where beginners can practice and try the models out.

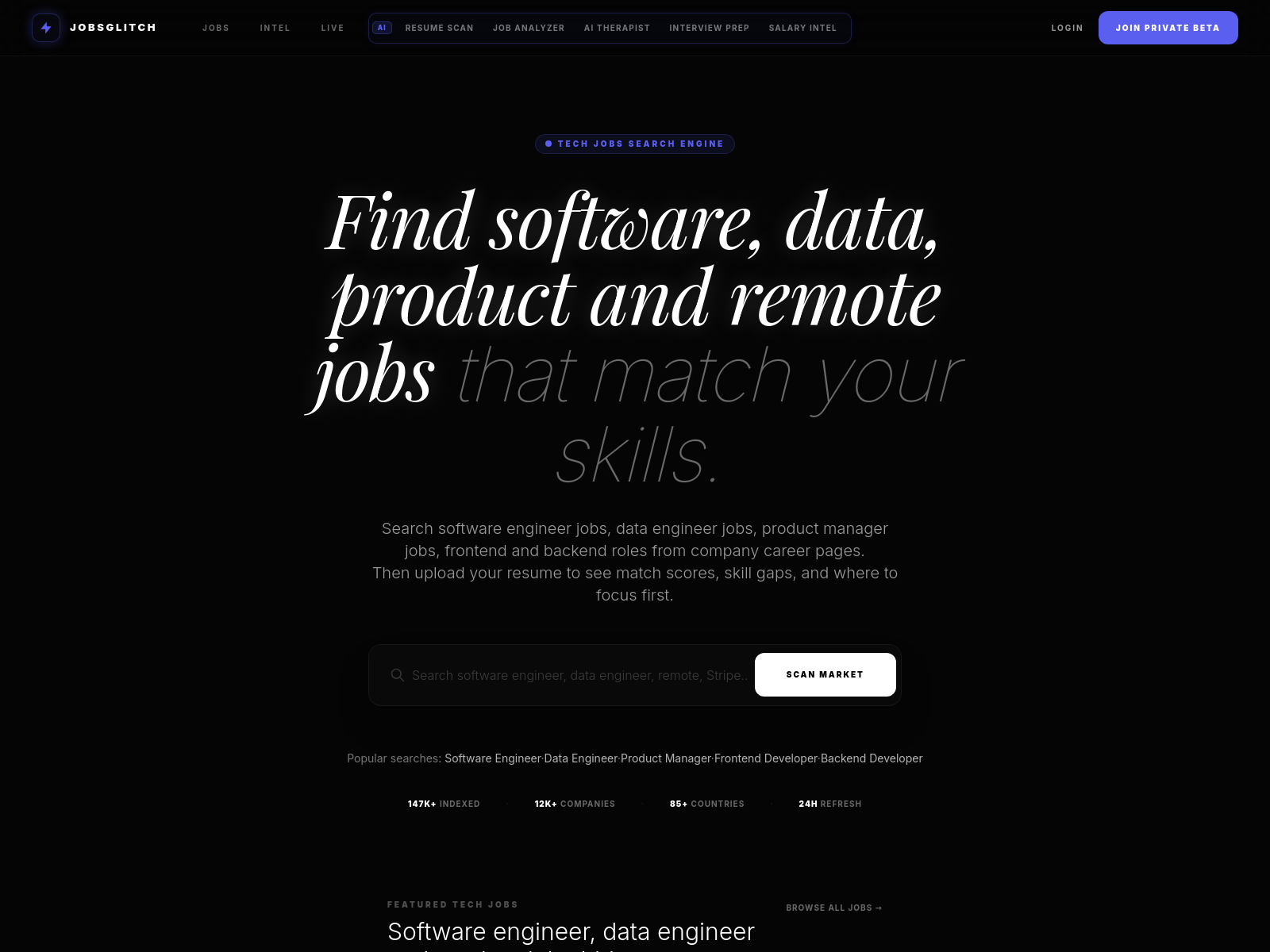

jobsglitch

jobsglitch is a tool that helps job seekers precisely match positions, supporting automatic resume scoring, skill comparison, and salary negotiation simulation.

✍️ Notes

Daily project information:

Website: https://www.nomoyu.com/

RSS: https://www.nomoyu.com/rss/rss.xml

WeChat Official Account: 明航的AI副业

Feel free to connect and exchange ideas.

See the website for all links.