2026-05-12. Nomoyu Daily for Indie Developers (Issue 360)

📰 News

Everyone is competing on models, but the real shortage is inference compute

Many people think the AI war is still on the model leaderboard.

But Baseten CEO Tuhin Srivastava sends a much sharper signal: Baseten grew 30x over the past year, the host says this year’s revenue is expected to exceed $1 billion, and more than 95% of its tokens come from custom models.

This reveals a more brutal reality: once AI truly enters business, the scarcest thing is not simply having a good model, but being able to run intelligence steadily, cheaply, and continuously.

Models are not the endpoint. Calls are the business

The real AI business does not happen on launch stages. It happens after every user click.

Inference is the process by which a model is called, generates an answer, and completes an action. In the past, everyone watched training: whose parameters were larger, whose leaderboard score was higher. But Tuhin’s judgment feels like a bucket of cold water: if AGI really arrives, the market left at the end will still be inference.

Because once intelligence becomes usable, it will not stay in the lab.

It will enter customer support tickets, medical records, code editors, sales workflows, and education products. Behind every “better answer” are repeated rounds of inference.

Baseten’s 30x annual growth is not just a company story.

It shows AI is moving from “who can build the model” to “who can run the model inside the business.”

The real moat is user signals others cannot access

The part of this interview most worth chewing on is not compute, but why application-layer companies can still survive.

Tuhin used Abridge as an example. It is an ambient documentation assistant used by doctors and is deeply embedded in hospitals and clinical workflows. How doctors revise notes and what they continue doing in electronic medical record systems after revisions are signals model labs cannot easily obtain.

That is the new moat for application companies: not “I also connected to a large model API,” but “I control a chain of user behavior that only I can see.”

Customer support is the same.

A ticket usually does not end with one answer. It may go through 1, 2, 10, or even 20 actions. Whoever can see those actions can use feedback to post-train the model, making it faster, cheaper, and more accurate on specific tasks.
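To make the idea of "seeing the actions" concrete, here is a minimal sketch of how a support product might record each step of a ticket and turn resolved tickets into feedback records. Everything in it (the `TicketAction` and `TicketTrace` schema, the `accepted` flag, the output format) is a hypothetical illustration, not something described in the interview.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class TicketAction:
    """One step taken while resolving a ticket (hypothetical schema)."""
    step: int
    actor: str       # "model" or "human"
    text: str
    accepted: bool   # was this output kept as-is, without a human edit?


@dataclass
class TicketTrace:
    ticket_id: str
    actions: List[TicketAction] = field(default_factory=list)
    resolved: bool = False

    def log(self, actor: str, text: str, accepted: bool) -> None:
        # Steps are numbered in the order they are logged.
        self.actions.append(
            TicketAction(len(self.actions) + 1, actor, text, accepted)
        )


def to_training_examples(trace: TicketTrace) -> List[dict]:
    """Turn a resolved trace into (context, response, accepted) records.

    Only model steps become examples. The `accepted` flag is the kind of
    signal a post-training job could consume; unresolved tickets are skipped.
    """
    if not trace.resolved:
        return []
    examples = []
    for i, action in enumerate(trace.actions):
        if action.actor != "model":
            continue
        examples.append({
            "ticket_id": trace.ticket_id,
            "context": [a.text for a in trace.actions[:i]],
            "response": action.text,
            "accepted": action.accepted,
        })
    return examples


trace = TicketTrace("T-1")
trace.log("human", "My invoice is wrong.", True)
trace.log("model", "Which invoice number?", True)
trace.log("human", "INV-42", True)
trace.log("model", "Refund issued.", False)  # a human agent rewrote this reply
trace.resolved = True

examples = to_training_examples(trace)
print(len(examples), [e["accepted"] for e in examples])  # 2 [True, False]
```

The point of the sketch is the shape of the data: the rejected second reply is exactly the kind of record that an outside model lab never sees.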

So the first AI companies in danger are not the ones with weak models, but the ones with no user signals, no workflow depth, and no feedback loop.

Without a loop, an AI app is just a pretty shell.

Tuhin has an even harsher suggestion: before product-market fit, do not rush into post-training. First use the strongest model to prove value, then talk about optimization.

Otherwise, what you are training is not a moat. It is an illusion.

The inference compute shortage is becoming a new price of admission

The harder layer is compute.

Tuhin says the market still does not fully understand how tight supply is. Baseten runs large clusters itself, often with utilization in the mid-90% range. It is deployed across 90 clusters in 18 clouds and can onboard a new provider in a new country into its inference network in half a day.

That sounds strong, but they still hold capacity meetings every day.

The true bottleneck is not only whether there are GPUs, but who can run data centers reliably and who understands inference-service SLAs.

This is changing the rules of competition.

Pure GPU as a Service can easily become a commodity. But inference services with a software layer are sticky. The interview mentioned that Baseten has had no churn among its top 30 customers, with annualized net revenue retention around 400%.
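For readers unfamiliar with the metric, net revenue retention is commonly computed as the revenue a customer cohort generates now divided by what the same cohort generated a year ago. The sketch below uses that common definition; the interview does not say how Baseten calculates its figure, and the dollar amounts are made-up inputs chosen only to show what 400% looks like.

```python
def net_revenue_retention(start_arr, expansion, contraction=0.0, churned=0.0):
    """Common NRR definition: ending revenue of a cohort / its starting revenue.

    All arguments are annualized revenue amounts for the same customer cohort.
    """
    if start_arr <= 0:
        raise ValueError("start_arr must be positive")
    return (start_arr + expansion - contraction - churned) / start_arr


# Hypothetical cohort: $1M a year ago, $3M of expansion, nothing lost.
nrr = net_revenue_retention(start_arr=1_000_000, expansion=3_000_000)
print(f"{nrr:.0%}")  # 400%
```

In other words, a figure around 400% means existing customers are, on average, spending about four times what they spent a year earlier, before counting any new customers.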

What is being sold behind that is not cards, but a full system made of model deployment, latency, failover, custom optimization, data retention, and enterprise requirements.

Procurement is even more extreme.

To get 1,024 B200s from a good cloud provider, you may need to sign a 3-to-5-year contract and prepay 20% to 30% of total contract value.
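A rough way to size that commitment is sketched below. The GPU count, contract length, and prepayment range come from the paragraph above; the hourly rate is a placeholder assumption, not a quoted price, so substitute your own number.

```python
HOURS_PER_YEAR = 365 * 24


def contract_prepayment(gpus, price_per_gpu_hour, years, prepay_fraction):
    """Return (total contract value, upfront prepayment) for a reserved cluster.

    Assumes every GPU is billed for every hour of the term.
    """
    total = gpus * price_per_gpu_hour * HOURS_PER_YEAR * years
    return total, total * prepay_fraction


ASSUMED_RATE = 3.00  # USD per GPU-hour; hypothetical placeholder, not a real quote

for years, fraction in [(3, 0.20), (5, 0.30)]:
    total, upfront = contract_prepayment(1024, ASSUMED_RATE, years, fraction)
    print(f"{years} years: total ${total:,.0f}, prepay ${upfront:,.0f}")
```

Whatever rate you plug in, the structure is the same: the upfront check scales with cluster size, term length, and prepayment share all at once, which is why the paragraph frames this as a question of capital structure rather than engineering.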

That means AI infrastructure is no longer only a technical competition. It is also a competition of capital structure, supply chain, operations culture, and nerve.

Compute is not background scenery. Compute itself is becoming a strategic asset.

The cheaper AI gets, the more humans will use it

Many people assume that if models get cheaper, AI costs will fall.

Tuhin observes the opposite. The cheaper inference gets, the more developers will stuff intelligence into products. Agents will run longer, try more paths, and make more intermediate judgments, just to deliver a better result to the user.

This is the AI version of the Jevons paradox: the cheaper intelligence gets, the more it is consumed.

Users will not say “this answer is cheap enough.” Users will say “I want a better answer.”

Enterprises also will not use less AI because it gets cheaper. They will embed it into more workflows.

Better answers bring better experiences; better experiences bring more revenue; more revenue then buys more inference.

That is what makes the inference market frightening.

It is not a one-time purchase. It is a demand curve that can amplify itself.
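The arithmetic behind this section can be sketched with a toy demand model. The constant-elasticity curve and every number below are illustrative assumptions, not figures from the interview; the only claim is that when usage responds more than proportionally to price (elasticity above 1), a price cut raises total spend.

```python
def monthly_spend(price, base_price, base_tokens_millions, elasticity):
    """Total spend under a constant-elasticity demand curve (toy model).

    price and base_price are USD per million tokens. Token demand scales as
    (price / base_price) ** -elasticity relative to the baseline volume.
    """
    tokens = base_tokens_millions * (price / base_price) ** (-elasticity)
    return price * tokens


base = monthly_spend(10.0, 10.0, 100.0, elasticity=1.5)
after = monthly_spend(5.0, 10.0, 100.0, elasticity=1.5)  # price is halved
print(f"{after / base:.2f}x")  # 1.41x

# With inelastic demand (elasticity below 1) the same price cut lowers spend.
inelastic = monthly_spend(5.0, 10.0, 100.0, elasticity=0.5)
print(f"{inelastic / base:.2f}x")  # 0.71x
```

Whether real inference demand has an elasticity above 1 is an empirical question; Tuhin's observation amounts to a bet that it does.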

The people truly eliminated are those still stuck at the demo stage

This interview offers a sharp reminder for AI practitioners and founders: do not obsess over “which model I connected.”

Models will change, leaderboards will change, chips will change, and prices will change.

Three things are truly scarce: unique user signals; a loop that returns those signals to the model; and the ability to run inference reliably inside real business.

AI will not reward only people who can write prompts.

It will reward people who can design workflows, capture feedback, reduce cost, and improve reliability.

Future companies will not simply replace software with AI interfaces. They will embed intelligence into every action. Doctors will have agents beside them, students will have agents beside them, and salespeople, support teams, and programmers will all have agents beside them.

In the interview, this was summarized as: everyone has a concierge service.

But for old software companies, this may also be an extinction moment.

Not because AI suddenly kills you, but because your competitor embeds intelligence into the workflow first and uses the user signals generated every day to train the next version of itself.

In the AI era, the most valuable thing is not “I have a model.”

It is: I have scenarios others cannot access, feedback others cannot see, and inference capabilities others cannot run.

🖥️ Software

Pasly

Pasly is a macOS clipboard manager with multi-device sync, allowing users to quickly save and revisit copied content.

DevGlish

DevGlish is a macOS menu-bar tool for developers who are non-native English speakers. It lets them look up English technical expressions and pronunciation, and flags phrasing influenced by Chinese, to improve English communication in teams.

TranscriptAPI

TranscriptAPI is a reliable API for retrieving YouTube video transcripts, supporting fast access to complete timestamped subtitles with a response time of only 49 ms.

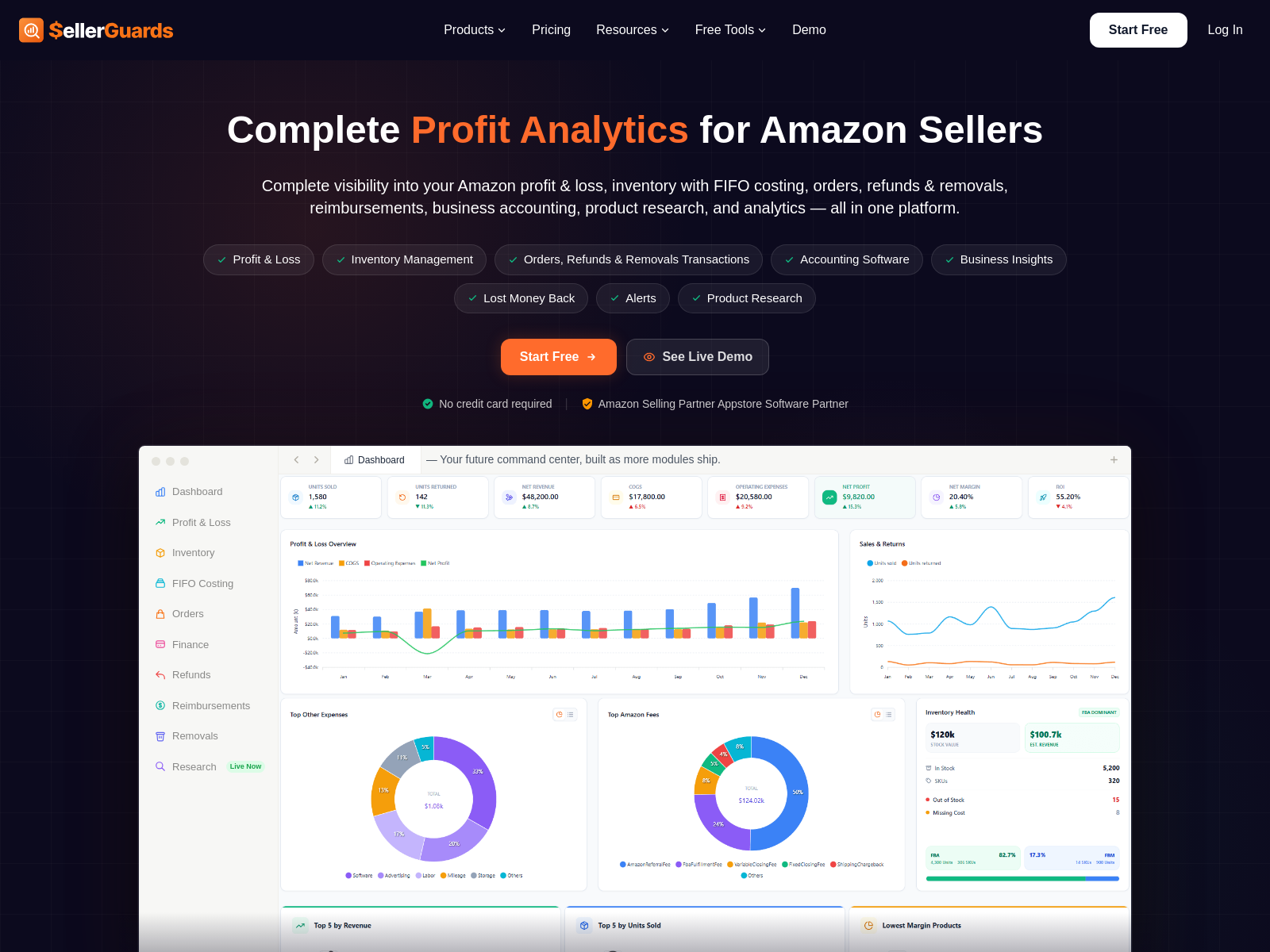

SellerGuards

SellerGuards is a tool for Amazon sellers that provides accurate profit calculation, competitor analysis, and inventory management based on the Amazon Selling Partner API.

Textideo

Textideo is a newly launched video generation tool that offers free trial credits on registration and welcomes user feedback and hands-on testing.

Fluent

Fluent is a tool that tracks filler words in real time, highlights them with red markers, and combines AI coach analysis to help users reduce filler-word usage.

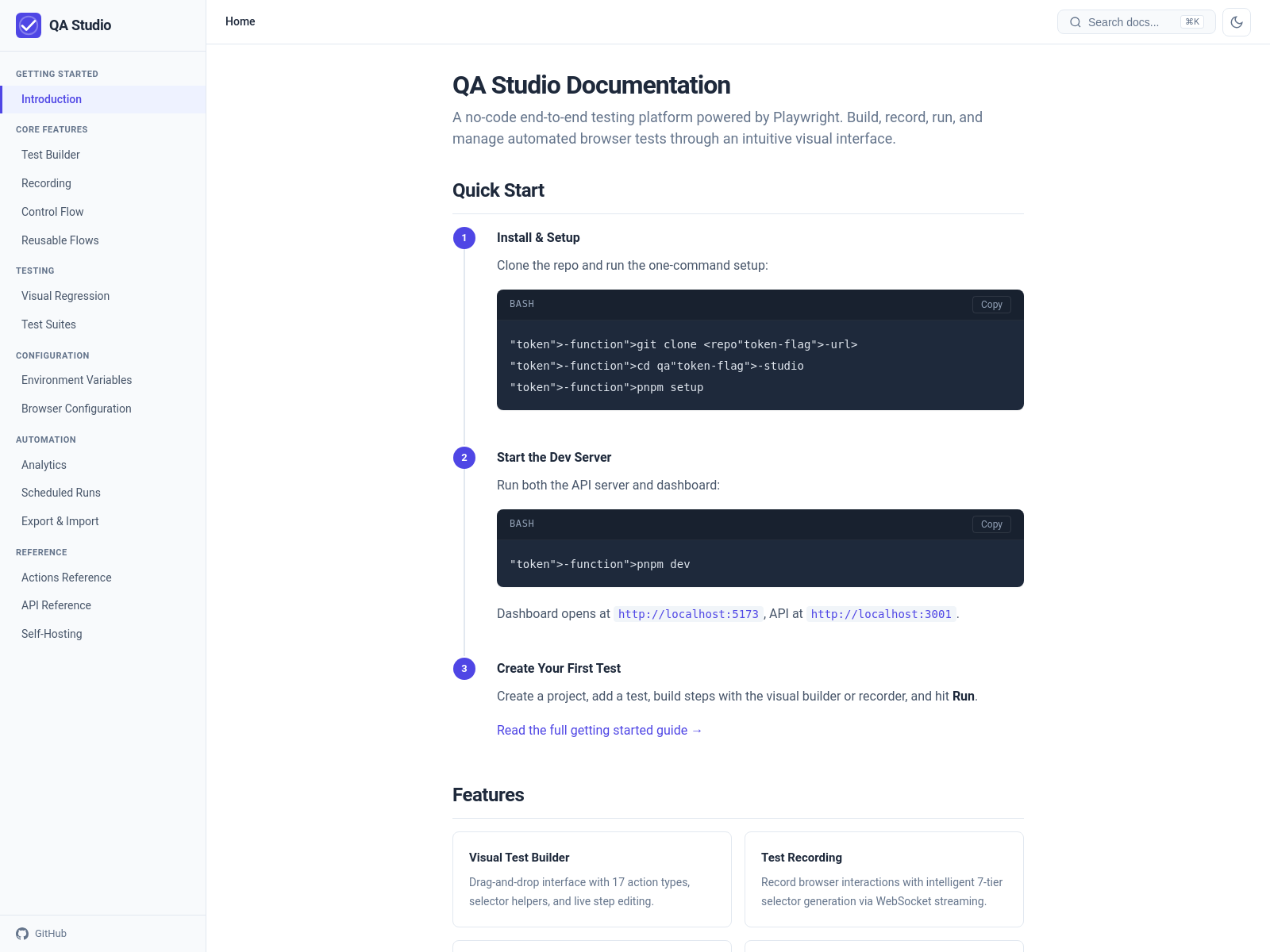

QA Studio

QA Studio is an open-source end-to-end testing tool that automatically generates test cases by recording user actions, supporting visual building and cross-browser testing.

homeassistion

homeassistion is local software written in Rust that bridges Mijia devices to HomeKit by connecting to the Mijia central gateway over MQTT. It also supports cloud access and has been running stably for a month.

🎮 Games

Hollywood Link

Hollywood Link is an indie game combining retro style with soundtrack-driven gameplay, where players advance the story through musical rhythm.

Neon Dealer: Risk & Profit

Neon Dealer: Risk & Profit is a cyberpunk deck-building game built around the core question of “make one more deal or not,” creating tension through the balance of risk and reward.

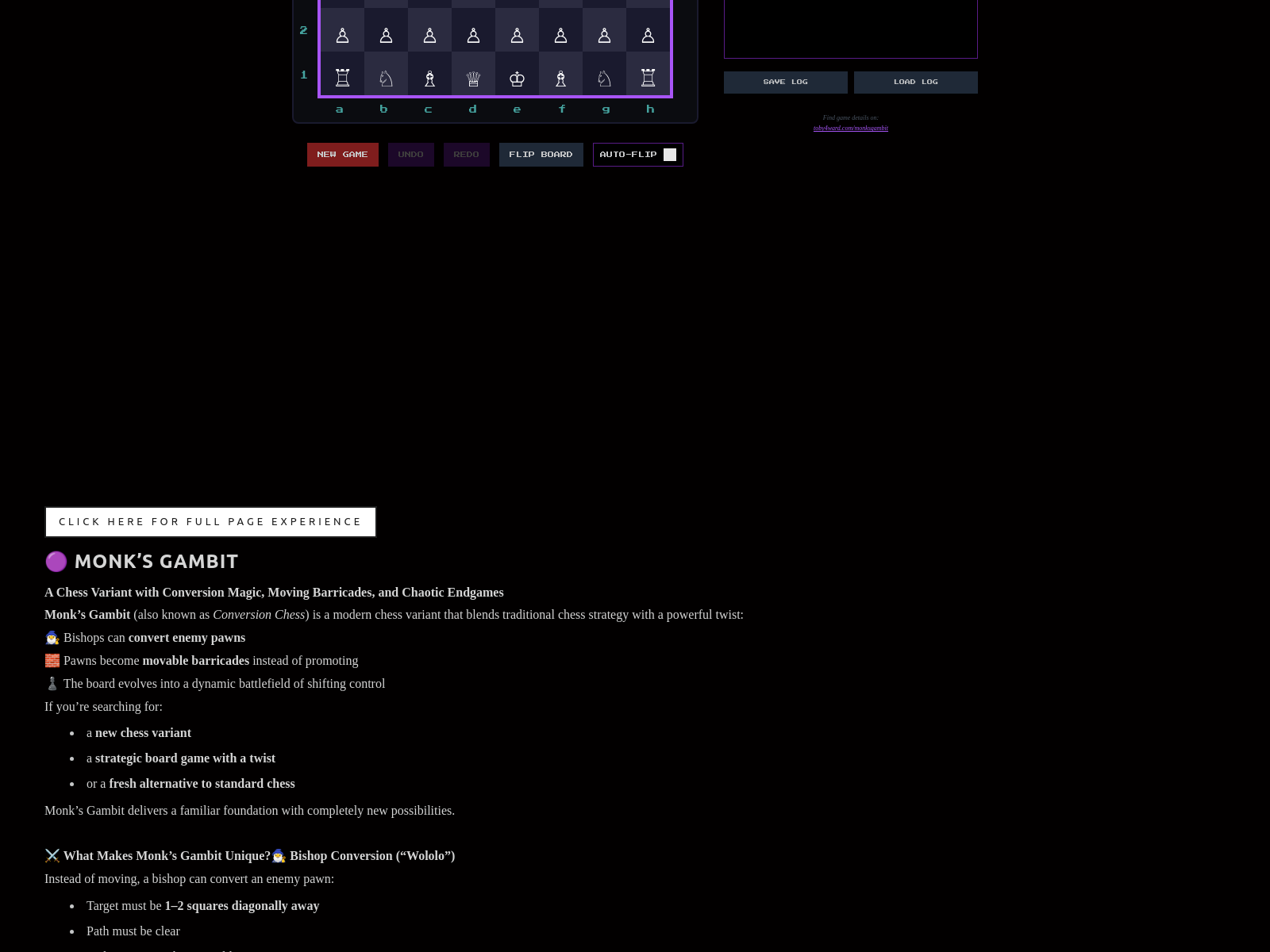

Monk’s Gambit

Monk’s Gambit is a chess-variant game developed with AI in 8 hours, supporting innovative rules such as monks converting enemy pawns and pawns becoming obstacles.

🌐 Websites

Killed by Google

Killed by Google is a data visualization website analyzing 299 discontinued Google products and revealing patterns in product retirement.

摩斯电码在线转换工具 (Online Morse Code Converter)

摩斯电码在线转换工具 is an online Morse code conversion tool that supports text-to-Morse conversion plus audio and light playback effects.

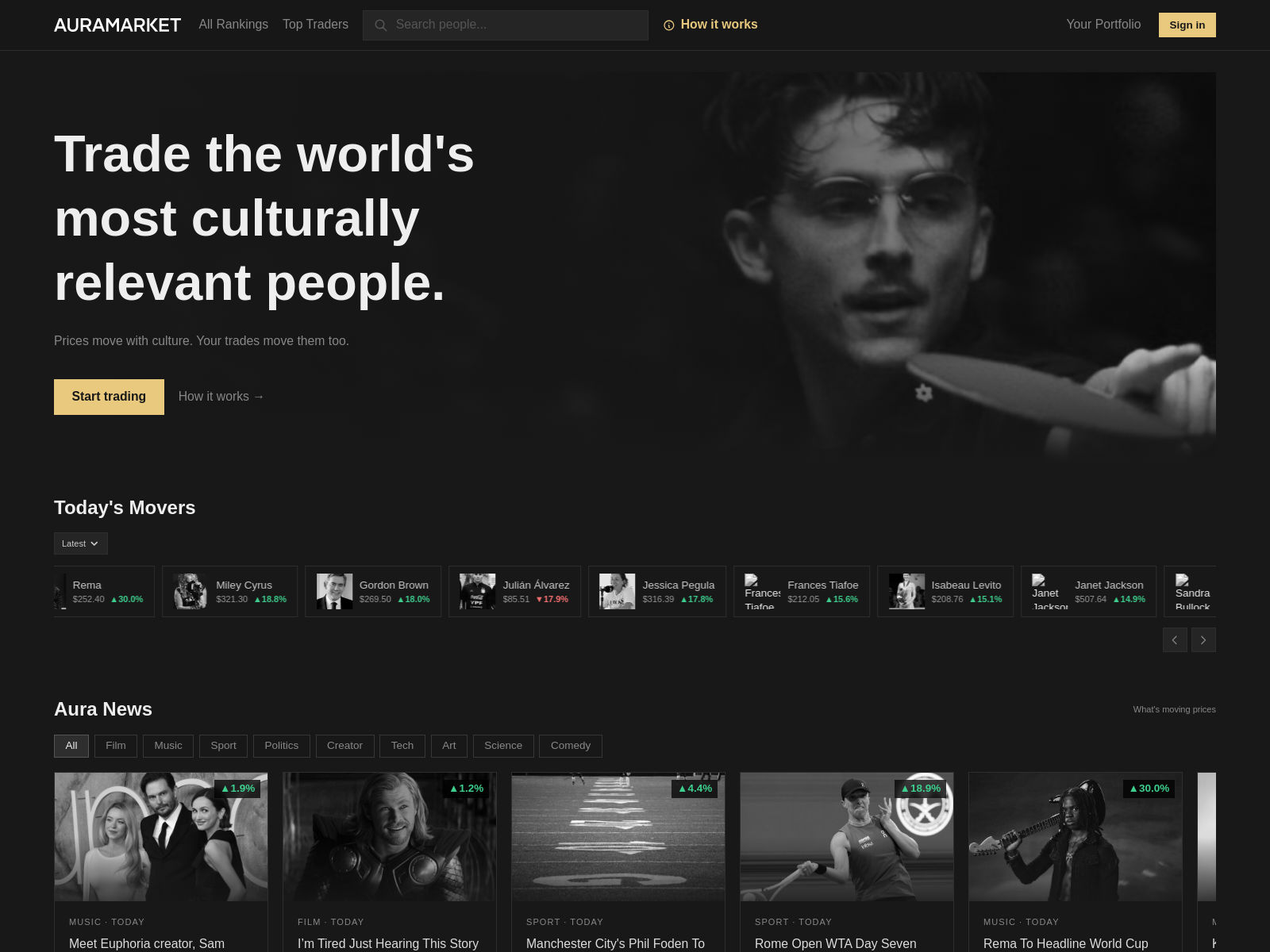
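For anyone curious how text-to-Morse conversion works, here is a minimal sketch using the standard International Morse Code table for letters and digits. It is not the site's implementation, and the function names and the " / " word separator are conventions chosen for this example; audio and light playback are left out.

```python
MORSE = {
    "A": ".-", "B": "-...", "C": "-.-.", "D": "-..", "E": ".", "F": "..-.",
    "G": "--.", "H": "....", "I": "..", "J": ".---", "K": "-.-", "L": ".-..",
    "M": "--", "N": "-.", "O": "---", "P": ".--.", "Q": "--.-", "R": ".-.",
    "S": "...", "T": "-", "U": "..-", "V": "...-", "W": ".--", "X": "-..-",
    "Y": "-.--", "Z": "--..",
    "0": "-----", "1": ".----", "2": "..---", "3": "...--", "4": "....-",
    "5": ".....", "6": "-....", "7": "--...", "8": "---..", "9": "----.",
}
REVERSE = {code: char for char, code in MORSE.items()}


def encode(text: str) -> str:
    """Letters are separated by a space, words by ' / '.

    Raises KeyError for characters outside the table (e.g. punctuation).
    """
    words = text.upper().split()
    return " / ".join(" ".join(MORSE[ch] for ch in word) for word in words)


def decode(morse: str) -> str:
    """Inverse of encode; the result is upper-case."""
    return " ".join(
        "".join(REVERSE[code] for code in word.split())
        for word in morse.split(" / ")
    )


print(encode("SOS"))               # ... --- ...
print(encode("Hi there"))          # .... .. / - .... . .-. .
print(decode(encode("Hi there")))  # HI THERE
```

Playback would sit on top of this output by mapping each dot and dash to a short or long tone or flash.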

AuraMarket

AuraMarket is a virtual stock market based on cultural attention, allowing users to trade influence shares of public figures and reflect their social attention in real time.

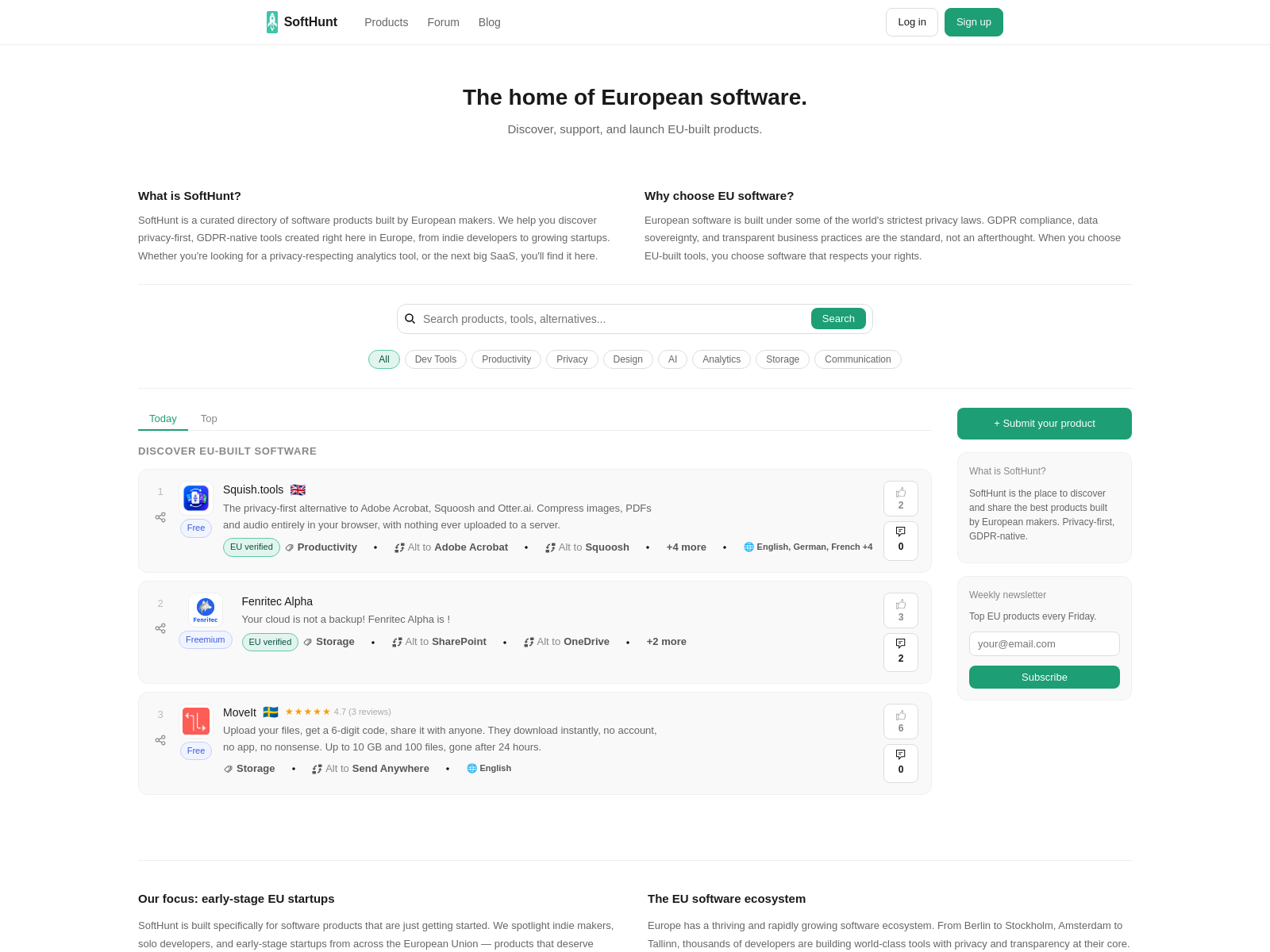

LaunchEU

LaunchEU is a discovery platform focused on European software. It supports European developers submitting products, community voting, EU certification badges, and promotion of privacy-friendly, GDPR-compliant local tools.

✍️ Notes

Daily project information:

Website: https://www.nomoyu.com/

RSS: https://www.nomoyu.com/rss/rss.xml

WeChat Official Account: 明航的AI副业

Feel free to connect and exchange ideas.

See the website for all links.